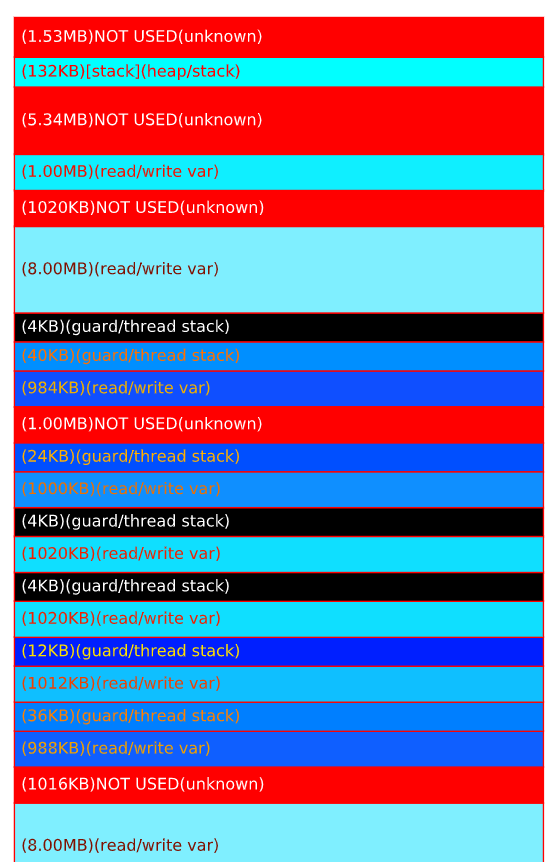

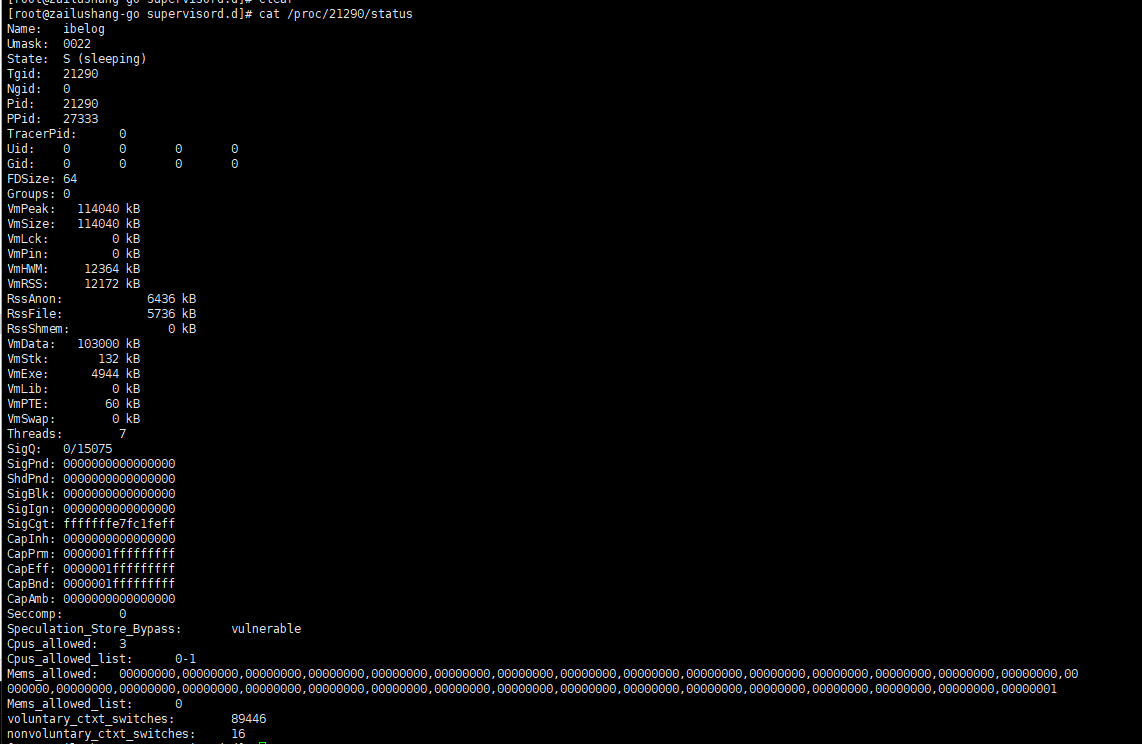

What you mentioned is not all! 8.5 also requires Intel SSE4 support on the CPU side. This is the showstopper regarding my lab.

This was very helpful in pointing us in the right direction. We were experiencing this same crash/boot loop while trying to upgrade from 8.4.0.4 to 8.5.0.5. As it turns out, our vCenter server was created many years ago on much older hardware and continually upgraded from there. As a result, our vCenter was still set to present "Penryn" generation CPUs to all virtual machines. "Penryn" only supports SSE4.1, and the crash logs on the mobility master say it needs SSE4.2. We had to change the setting in vCenter to present a newer generation CPU to virtual machines, and then the mobility master booted right up (we're at "Skylake" now). Apparently this is a setting that carries over through vCenter upgrades instead of changing based on the hardware. For clarity, the setting in vCenter to check out is "VMware EVC".

To answer the question of why the datapath crash is seen when 2 NUMA nodes are assigned: with the 8.5.0.0 DPDK, hugepages are divided equally between both NUMA sockets. There seems to be a direct relation between sockets and NUMA nodes. What I observed was that, even though 2 NUMA nodes are assigned, the physical_id for all CPUs under /proc/cpuinfo is 0. I guess DPDK is not able to find socket id 1, so when it tries to allocate memory on socket 1 it fails. I guess this is not an issue in larger ESXi installations, but it is on my single server with sparse resources. I also contacted TAC.
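Both symptoms above (missing SSE4.2 and every CPU reporting socket 0) can be checked from inside any Linux guest by reading /proc/cpuinfo. Here is a minimal Python sketch, assuming the usual x86 layout where each logical processor is a blank-line-separated block with a `flags` line and a `physical id` line. The function names and the `SAMPLE` text are made up for illustration and are not taken from the mobility master.

```python
# Hypothetical two-CPU sample in /proc/cpuinfo layout (trimmed to the fields used here).
SAMPLE = (
    "processor\t: 0\nphysical id\t: 0\nflags\t\t: fpu sse4_1 sse4_2\n"
    "\n"
    "processor\t: 1\nphysical id\t: 0\nflags\t\t: fpu sse4_1 sse4_2\n"
)


def parse_cpuinfo(text):
    """Split /proc/cpuinfo text into one dict per logical processor."""
    cpus = []
    for block in text.strip().split("\n\n"):
        entry = {}
        for line in block.splitlines():
            key, sep, value = line.partition(":")
            if sep:  # skip lines that have no "key : value" shape
                entry[key.strip()] = value.strip()
        if entry:
            cpus.append(entry)
    return cpus


def has_sse42(cpus):
    """True only if every logical processor advertises the sse4_2 flag."""
    return bool(cpus) and all(
        "sse4_2" in cpu.get("flags", "").split() for cpu in cpus
    )


def physical_ids(cpus):
    """Distinct 'physical id' (socket) values the guest can see."""
    return {cpu["physical id"] for cpu in cpus if "physical id" in cpu}


cpus = parse_cpuinfo(SAMPLE)
print("SSE4.2 exposed:", has_sse42(cpus))
print("physical ids seen:", sorted(physical_ids(cpus)))
```

On a real guest you would pass the contents of /proc/cpuinfo instead of `SAMPLE`. A Penryn EVC baseline would be expected to show `sse4_1` without `sse4_2`, and the NUMA case described above would show a single physical id.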

A sample /proc/cpuinfo entry: processor : 0 vendor_id : GenuineIntel cpu family : 15 model : 2 model name : Intel (R) Xeon (TM) CPU 2.40GHz stepping : 7 cpu MHz : 2392.

On ESXi it was observed that when more than half the CPUs are allocated to a VMM instance, 2 NUMA nodes are assigned to the VMM. To override this, set under VM advanced option to the number of CPUs allocated to the VMM. In this case only one NUMA node is assigned to the VMM and the datapath won't crash.
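The "more than half" observation is easy to turn into a quick pre-check before sizing a VMM. This is only a sketch of the rule as reported in this thread, not of how ESXi actually places NUMA nodes. The function name is hypothetical, and the `inclusive` switch exists because the wording says "more than half" while the example given elsewhere in the thread is ">= 6" CPUs on a 12-CPU host.

```python
def may_span_two_numa_nodes(host_cpus, vm_cpus, inclusive=False):
    """Apply the rule of thumb reported in this thread.

    inclusive=False: strictly more than half of the host CPUs.
    inclusive=True:  half or more (matches the ">= 6 of 12" example).
    """
    if host_cpus <= 0 or vm_cpus <= 0:
        raise ValueError("CPU counts must be positive")
    if inclusive:
        return 2 * vm_cpus >= host_cpus
    return 2 * vm_cpus > host_cpus


# 12-CPU host, as in the example from the thread.
for vm_cpus in (4, 6, 7):
    strict = may_span_two_numa_nodes(12, vm_cpus)
    loose = may_span_two_numa_nodes(12, vm_cpus, inclusive=True)
    print(f"{vm_cpus} vCPUs: strict={strict} inclusive={loose}")
```

If the check comes back true for your sizing, that is the case where the override above is worth looking at.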

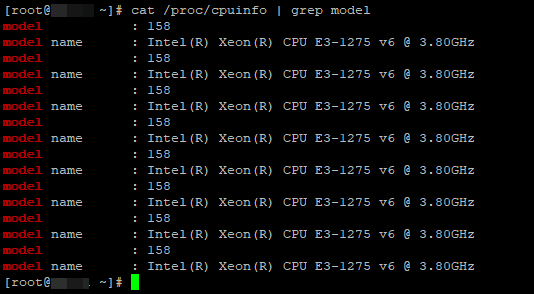

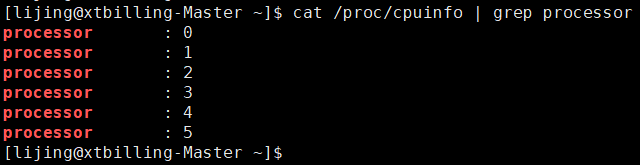

I did contact TAC and got an explanation as to why it will not work on my small ESXi lab setup. I have run all versions from 8.0 up to 8.4. As of 8.5 the system is checking NUMA nodes; this is what TAC wrote to me:

The issue is seen when more than half the number of CPUs on ESXi are allocated to a VMM instance, for example if the ESXi server has 12 CPUs and the VMM instance is allocated >= 6 CPUs. This might be due to the CPUs per socket configuration.

With Docker on Windows or MacOS, you typically have command line tools installed in your base OS that allow seamless management of the Docker containers in the Docker VM.

esxcli hardware cpu list will give you the family, model, type, stepping, clock speed, and L2/L3 cache sizes.

The /proc/cpuinfo file has information about your CPU. The information includes the number of CPUs, threads, cores, sockets, and Non-Uniform Memory Access (NUMA) nodes. There is also information about the CPU caches and cache sharing, family, model, bogoMIPS, byte order, and stepping.

This is a quick post to explain that by default Docker does not need hardware virtualization (VT-X). In the sense of allowing you to run multiple independent environments on the same physical host, yes, Docker is a form of virtualization. Docker containers allow you to run processes in isolation from each other and from the base OS; you decide and specify if you want the base system to share any resources (IP addresses, TCP ports, directories with files) with any of the containers.

The key difference from KVM or VMware virtualization is that Docker is not using hardware virtualization. Instead, it leverages Linux functionality: namespaces and control groups. Linux namespaces are provided and supported by the Linux kernel to allow separation (virtualization) of process ID space (PID numbers), network interfaces, interprocess communication (IPC), mount points and kernel information. Control groups in Linux allow accurate resource control: using control groups allows Docker to limit CPU or memory usage for each container.

Docker needs a 64-bit Linux OS running a modern enough kernel to operate properly. This means that if that is what you have happily running on your hardware without hardware virtualization support, it will be plenty for Docker.

Now, this gets a bit tricky when you're talking about Docker on Windows or MacOS. They don't have a native Linux environment, so they have to run a Linux virtual machine that runs the Docker engine.
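To make the control groups point concrete: the CPU and memory limits mentioned above are what `docker run` exposes through its `--cpus` and `--memory` flags. The small Python sketch below only builds the command line and does not run it. The helper name, the `alpine` image and the limit values are arbitrary choices for illustration.

```python
import shlex


def docker_run_args(image, cpus=None, memory=None, command=()):
    """Build a `docker run` argument list with optional cgroup-backed limits."""
    args = ["docker", "run", "--rm"]
    if cpus is not None:
        args.append(f"--cpus={cpus}")      # CPU limit, e.g. 1.5 CPUs
    if memory is not None:
        args.append(f"--memory={memory}")  # memory limit, e.g. 256m
    args.append(image)
    args.extend(command)
    return args


args = docker_run_args("alpine", cpus=1.5, memory="256m", command=["sleep", "60"])
print(shlex.join(args))
```

Passing the resulting list to `subprocess.run` on a host with Docker installed would start the container with those limits. None of this requires VT-X on a native Linux host.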
